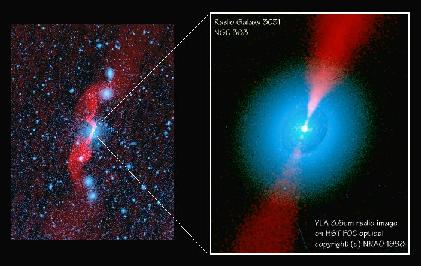


FIGURE Light consists of alternating electric and magnetic fields moving through space at the speed of light. The distance between the peaks is the wavelength of the light.

At the end of this section you should understand the following:

Review questions on light:

Basic Properties of Light

Light is very important to astronomy, and for obvious reasons. Astronomers are not able to go out and sample the objects of their study, namely stars and galaxies (an exception can be made for the study of the solar system). All they can do is sit here on Earth and observe the light which comes from those distant objects. Fortunately, that light contains a huge amount of information. But to interpret that information we have to understand what light is, how it is generated and absorbed, and how it propagates through space. Light was a mystery to early scientists. What is it? What is it composed of? Well, let us first observe that light is a form of energy that is radiated through space; hence, light is radiant energy. We often think of different kinds of light as if they were completely different things: heat radiation, visible light, tanning rays, X-rays. But these are all different manifestations of light.

Since light is energy radiating through space we next need to consider whether it travels instantaneously or at some finite speed. Early attempts to measure the speed of light were not successful, basically because the speed of light, as we now know, is very large. The speed of light is designated by the character c, and it is about equal to 300,000 kilometers per second (km/s). The finite speed of light was demonstrated for the first time by astronomical means, specifically by noting that the eclipses of Jupiter's moons were observed later than expected when Jupiter was farther away from the Earth, because the light had a longer distance to travel.

Is light a particle or some sort of a "wave" in some unknown substance? Isaac Newton thought that light had to be composed of particles, in part because a light beam projected through a hole didn't spread out through space like sound waves or water waves would. However, in 1803 Thomas Young passed light through two narrow slits and produced a wave interference pattern of alternating dark and light bands, a pattern that is created only by waves. This seemed to settle the matter, although, in fact, light is both a particle and a wave. Let us see how these two natures manifest themselves.

The most significant breakthrough into the nature of light was achieved by James Clerk Maxwell. Maxwell was studying the behavior of electric and magnetic fields. He found that a time-varying electric field could generate a magnetic field and vice versa. He was able to put together a set of equations that described this behavior. What he found from his equations was that alternating electric and magnetic fields traveled through space as a wave at a speed that was equal to the speed of light. Maxwell's great leap of intuition was to conclude that light itself is such a wave of electric and magnetic fields. Indeed we now refer to light as electromagnetic radiation.

Can we make the idea of electromagnetic fields a little more familiar? Most people are acquainted with magnets and hence magnetic fields. Electric fields are produced by things with electric charge. Both electric and magnetic fields can produce forces that attract and forces that repel. What Maxwell showed was that a field that is changing in intensity with time will generate the other kind of field. This is, for example, how electric generators work: spinning magnets create electric fields which drive electric currents. Now in the case of light, one has the magnetic and electric fields without the magnets or the individual electrical charges. The fields are constantly fluctuating, generating each other and propagating through space. The fields move through space at the speed of light. Electric and magnetic fields are types of energy, and they can propagate through space, hence light is energy radiating through space.


We speak of light as a wave phenomenon; if you like, think of light as waves of electric and magnetic field moving through space. Other waves you might be familiar with include sound waves (which move at the speed of sound) and water waves (which too have a wave speed). Light and all waves are characterized by their wavelength, which is the distance from one wavecrest to the next. Wavelength is generally designated with the Greek letter lambda and is measured in units of distance. Frequency is the other measure of a wave, and it is the number of waves that pass a given point per second. Frequency is designated by the Greek letter nu and is in units of inverse time (e.g., "cycles per second"). A cycle per second is the unit of frequency and this is known as a "Hertz." Since all light waves travel at the speed of light one can see that wavelength and frequency must be related to each other, for if waves of light of a certain wavelength are going past you at the speed c you will see a number of individual wavecrests go by in one second that is given by the speed of light divided by the wavelength. Hence for light the frequency and wavelength are related by the formula:

(Frequency) x (wavelength) = (speed of light)
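As a rough illustration of this relation, the sketch below converts between wavelength and frequency. It assumes the rounded value of c quoted above (300,000 km/s); the function names are made up for this example.

```python
# Rounded speed of light in meters per second (the text quotes ~300,000 km/s).
SPEED_OF_LIGHT_M_PER_S = 3.0e8


def frequency_from_wavelength(wavelength_m: float) -> float:
    """Return the frequency in hertz for a wavelength given in meters."""
    return SPEED_OF_LIGHT_M_PER_S / wavelength_m


def wavelength_from_frequency(frequency_hz: float) -> float:
    """Return the wavelength in meters for a frequency given in hertz."""
    return SPEED_OF_LIGHT_M_PER_S / frequency_hz


if __name__ == "__main__":
    # The two ends of the visible band, in nanometers.
    for name, wavelength_nm in [("red", 700.0), ("violet", 400.0)]:
        frequency = frequency_from_wavelength(wavelength_nm * 1e-9)
        print(f"{name}: {wavelength_nm:.0f} nm -> {frequency:.2e} Hz")
```

Because the product of frequency and wavelength is fixed at c, the shorter wavelength always comes out with the higher frequency.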

Although we tend to think of light as just the visible white light that we see with, light comes in a complete spectrum of types, the electromagnetic spectrum. Each "flavor" of light is characterized by a particular wavelength. The visible spectrum is composed of light of different colors (the rainbow colors) and the wavelengths of this light range from about 700 nanometers to 400 nanometers. The long wavelength (lower frequency) end is the red light and the short wavelength (higher frequency) end is the blue and violet light. In traveling through a prism, light is bent by the glass but the amount of bending depends on the light's wavelength. This is what spreads the white light out into the separate colors. (The same thing produces a rainbow, with the raindrops acting like tiny prisms.) But there are many more flavors of light than just the visible spectrum. To the long wavelength side (beyond red) are the infrared (which you know as heat radiation), microwaves, and radio waves. FM radio has a higher frequency than AM radio, for example. At wavelengths shorter than violet we have the ultraviolet (sunburning rays) and then X-rays and then gamma rays. The latter examples give some hint that the energy of a given type of light is also tied to its wavelength, since at least in terms of biological damage X-rays are worse than UV, and gamma rays are the worst of all. And indeed, the shorter the wavelength (or higher the frequency), the greater the energy of the light.

FIGURE Different wavelengths of the fluctuating electromagnetic waves correspond to different types of light in the electromagnetic spectrum. The visible portion of the spectrum is a small region from about 400 to 700 nanometers (billionths of a meter). Different astronomical objects emit most of their radiation in different parts of the spectrum. Today's astronomer studies the sky in all wavelengths.

We have been speaking of light as a wave and indeed it is. But modern physics has shown that light also acts like a particle because it comes in minimum-sized packets, individual "particles" of light which are called photons. In particular, it was Einstein's explanation of the photoelectric effect that introduced the photon to modern physics. For some purposes it is best to think of light as a wave, but for others it is best to think of it as a bunch of little photon particles. This particle nature of light becomes most important when discussing matter emitting or absorbing light, since it can do so only in these discrete amounts. Specifically the energy of each photon of light of a given wavelength is expressed by Planck's law:

(Energy) = hc / (wavelength)

where h is a number called Planck's constant. Planck's constant is a very tiny number (which means that each individual photon carries a very small amount of energy). But Planck's law expresses the fact that a photon of ultraviolet light is much more energetic than a photon of red light. For that matter blue light is much more energetic than red and this has an effect in photography. Photography works by having photons pass through a camera and land on a film that has chemicals that undergo changes when they absorb a photon of a given wavelength. In color photography the chemicals have to react only to a narrow range of photon wavelengths. Because red photons carry less energy per photon it is harder to get them to react with chemicals, making it more of a challenge to get a good color film that does reds well. As another example, a gamma ray photon can really do damage to biological cells by wrecking the molecules that they smash into. Visible light photons however do not have the energy to smash apart those molecules. This is something you already know: gamma rays and X-rays are dangerous to biological organisms (e.g. you).
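To put numbers on the comparison between red and blue photons, here is a minimal sketch of the energy formula above. The value of Planck's constant and the rounded speed of light are standard figures that the text does not quote, and the function name is invented for this example.

```python
# Standard constants in SI units (not quoted in the text above).
PLANCK_CONSTANT_J_S = 6.626e-34   # Planck's constant h, in joule-seconds
SPEED_OF_LIGHT_M_PER_S = 3.0e8    # rounded speed of light c, in meters per second


def photon_energy_joules(wavelength_m: float) -> float:
    """Energy of one photon, E = h c / wavelength, with wavelength in meters."""
    return PLANCK_CONSTANT_J_S * SPEED_OF_LIGHT_M_PER_S / wavelength_m


if __name__ == "__main__":
    red = photon_energy_joules(700e-9)
    violet = photon_energy_joules(400e-9)
    print(f"red photon (700 nm):    {red:.2e} J")
    print(f"violet photon (400 nm): {violet:.2e} J")
    print(f"violet / red energy ratio: {violet / red:.2f}")
```

The energy ratio depends only on the ratio of the wavelengths, so a 400 nm photon carries 700/400 = 1.75 times the energy of a 700 nm photon regardless of the exact constants used.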

You may protest that light has to be one or the other, either a wave or a particle. But light is whatever it is; we are describing what its observed behavior is like. Under many circumstances light behaves like a wave and has observed wavelike properties such as wavelength and frequency. When it is absorbed or emitted it behaves like a particle in that the "amount" of light is always in discrete units we call "photons." One uses whatever picture, wave or particle, that best describes the observed phenomenon. (Remember the parable about the blind men and the elephant.)

The Emission of Light

We now know something about the general nature and property of light. But how does light get produced? Specifically we must consider the subject of the emission of light from objects (and its inverse, the absorption of light by objects). The whole subject of the emission, absorption, and interaction of light with matter is pretty complicated. We will focus on a few specific processes that are very important for astronomy.

The first kind of light emission we will consider is the so-called "thermal emission" or blackbody radiation. A blackbody is defined to be a perfect thermal emitter; it would be black because an ideal emitter would also be an ideal absorber. The radiation given off by a blackbody is determined solely by its temperature. If you were to shine a light onto a blackbody it would absorb the photons and then reradiate their energy as light appropriate to the temperature of the blackbody.

How does a blackbody work? As an approximate picture of what is going on think of the atoms and molecules that make up all matter. They are in a constant state of motion, a thermal (related to heat or temperature) motion; that is, they are "jiggling" or vibrating about. The amount of motion is characterized by an object's temperature: the higher the temperature, the faster the molecules and atoms move about. Now recall that light is changing electromagnetic fields. The electrons and protons that make up atoms are charged, and if they are moving they can generate varying electric and magnetic fields. The faster they move, the more rapid the change in the fields and hence the higher the frequency of light. Although the actual situation is rather more complicated, this provides a rationale for the basic fact of thermal radiation: the higher an object's temperature, the higher the frequency of the light it emits (hotter equals bluer).

As an experiment, go find an electric range. Turn it on low and wait for a few moments, then place your hand over the heating element. You can feel heat radiation coming up from the unit. This is infrared radiation being emitted from the coil. Your skin acts as an infrared detector. If you turn the heat up, eventually the coil begins to glow dull red (take your hand away!). Now in addition to the infrared radiation some noticeable amount of red light is being emitted. If you turn it up further the coil glows orange as more visible light is emitted. You also know that the temperature of the coil has increased throughout this experiment. One might imagine that if there were even higher settings on the stove you could get a white hot burner. In principle you could imagine turning the temperature up until you got mostly gamma rays (this would be a temperature in excess of ten million degrees). Hence the higher the temperature of a blackbody emitter, the higher the frequency (or shorter the wavelength) of the predominant light it emits. The relation between temperature and the dominant wavelength of the light emitted by a blackbody is called Wien's law. It is expressed as

(Peak Wavelength in centimeters) = 0.29/T.

Note that the wavelength is measured in centimeters and the temperature in kelvins. Because of the inverse relation to the temperature one sees that as the temperature goes up the peak wavelength goes down.

Now thermal radiation is not emitted only at the peak wavelength. There is a complete spectrum of radiation emitted; it simply has a peak at the wavelength given by Wien's law. The sun is nearly a blackbody thermal emitter with a temperature of about 5800 Kelvin. This corresponds to a peak wavelength of 500 nanometers which is right about at green (right in the middle of the visible band of light; coincidence or what?).
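The 500 nanometer figure can be checked directly from Wien's law as written above. This is only a sketch: the constant 0.29 is the rounded value used in the text, the function names are invented for this example, and the 3000 Kelvin star is a made-up comparison case.

```python
# Rounded Wien constant from the text, in centimeter-kelvins.
WIEN_CONSTANT_CM_K = 0.29


def peak_wavelength_cm(temperature_k: float) -> float:
    """Peak blackbody wavelength in centimeters for a temperature in kelvins."""
    return WIEN_CONSTANT_CM_K / temperature_k


def peak_wavelength_nm(temperature_k: float) -> float:
    """Same peak wavelength, converted to nanometers (1 cm = 1e7 nm)."""
    return peak_wavelength_cm(temperature_k) * 1e7


if __name__ == "__main__":
    # The sun (temperature from the text) and a hypothetical cooler star.
    for name, temperature_k in [("sun", 5800.0), ("cool star", 3000.0)]:
        print(f"{name}: T = {temperature_k:.0f} K, "
              f"peak = {peak_wavelength_nm(temperature_k):.0f} nm")
```

The cooler star peaks beyond 700 nanometers, in the infrared, which is the "hotter equals bluer" rule read in reverse.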

As a blackbody sits and emits radiation it is radiating away photons, and hence energy. In fact a blackbody is trying to come into temperature equilibrium with its surroundings. If it absorbs more energy than it emits, then it heats up. If it emits more than it absorbs, then it cools down. The sun is sitting in space with a surface temperature of 5800K radiating photons into space (which has a temperature of about 3K). So the sun is trying to cool down by the emission of light. The energy carried off by the light is replaced by nuclear reactions within the sun.

It is relatively simple to characterize the amount of energy a blackbody gives off. This is important in trying to compute the total energy emitted by the sun, that is, its luminosity. If you add up all the light emitted by a blackbody then you would get the total energy flux carried by that light. There is a specific formula that describes this energy flux, and that is the Stefan-Boltzmann law, which states that the total energy per second per unit emitting surface area in light from a blackbody is proportional to the temperature to the fourth power:

$(\text{Flux of Energy}) = \sigma T^4$.

Sigma is a constant called the Stefan-Boltzmann constant. Because the energy flux goes as the temperature to the fourth power, a hotter blackbody gives off a lot more energy than a cooler blackbody. For example, if star A is the same size as star B but twice as hot at the surface, it is giving off 16 times as much blackbody radiation energy as B.

Flux is energy per unit area, so a computation of the total energy given off by a star requires knowing how big the star is (which is why in the above example we said that stars A and B had the same size). As an example we can write that the total energy per second from the blackbody radiation of the sun is equal to the sun's surface area times the Stefan-Boltzmann law:

$L = 4 \pi R^2 \sigma T^4$

where R is the radius of the sun, L is the luminosity of the sun, and T is the temperature of the sun. So redoing our example from above, now assume that star A has half the radius of star B and twice the temperature. How do their luminosities compare? Because the ratio of their radii is 1/2 and radius is squared in the formula, shrinking a star by 1/2 reduces its luminosity by a factor of 4. But doubling the temperature increases the flux by a factor of 16. The combination of the two effects now makes star A 4 times as luminous as star B.
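The star A versus star B comparison can be reproduced with a few lines of code. This is a sketch under stated assumptions: the Stefan-Boltzmann constant is the standard value in centimeter-gram-second units (the text does not quote it), the solar radius of 7 × 10^10 cm is the value used elsewhere in these notes, and the function name is invented for this example.

```python
import math

# Stefan-Boltzmann constant in cgs units: erg per second per cm^2 per K^4.
# This is the standard value; it is not quoted in the text above.
STEFAN_BOLTZMANN_CGS = 5.67e-5


def luminosity(radius: float, temperature: float) -> float:
    """Blackbody luminosity L = 4 pi R^2 sigma T^4.

    With radius in centimeters and temperature in kelvins the result is in
    erg per second. For ratios, any consistent units work.
    """
    return 4.0 * math.pi * radius**2 * STEFAN_BOLTZMANN_CGS * temperature**4


if __name__ == "__main__":
    # Star A has half the radius and twice the temperature of star B.
    ratio = luminosity(0.5, 2.0) / luminosity(1.0, 1.0)
    print(f"L_A / L_B = {ratio:.1f}")

    # Rough solar luminosity from R = 7e10 cm and T = 5800 K.
    print(f"L_sun is roughly {luminosity(7e10, 5800.0):.1e} erg/s")
```

The constants cancel in the ratio, so the factor of 4 follows from (1/2)^2 × 2^4 alone.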

The Stefan-Boltzmann law potentially gives us a way of determining the radius of a star. We can determine the temperature by means of the peak in the blackbody spectrum. Next we can measure the amount of energy received here at the Earth. This is some small fraction of the total energy emitted, i.e., the luminosity of the star. The light of the star spreads out through space over a larger and larger area; specifically, at a distance d from the star, it is spread over the area of a sphere of radius d, i.e., $4 \pi d^2$. If we capture some small amount of the star's light in a telescope, the ratio of the area of the telescope to the area of that huge sphere will be the ratio of the amount of light we capture compared to the total amount of light emitted. Thus if we know the distance to the star we know its luminosity. Using the Stefan-Boltzmann law we can then compute the radius.
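The chain of reasoning in this paragraph (measured flux, then luminosity, then radius) can be sketched as follows. The numbers are assumptions chosen for illustration: the flux of sunlight at the Earth (about 1.36 × 10^6 erg per second per square centimeter) and the Stefan-Boltzmann constant are standard values not quoted in the text, and the function names are invented for this example.

```python
import math

STEFAN_BOLTZMANN_CGS = 5.67e-5  # erg / (s cm^2 K^4), standard value


def luminosity_from_flux(flux: float, distance_cm: float) -> float:
    """Total luminosity from the flux measured at a known distance.

    The light is spread over a sphere of area 4 pi d^2, so L = 4 pi d^2 F.
    """
    return 4.0 * math.pi * distance_cm**2 * flux


def radius_from_luminosity(luminosity: float, temperature_k: float) -> float:
    """Invert L = 4 pi R^2 sigma T^4 to get the radius in centimeters."""
    return math.sqrt(
        luminosity / (4.0 * math.pi * STEFAN_BOLTZMANN_CGS * temperature_k**4)
    )


if __name__ == "__main__":
    flux_at_earth = 1.36e6      # erg / (s cm^2), assumed measured value
    distance_cm = 1.5e13        # Earth-sun distance, 150 million km
    temperature_k = 5800.0      # from the peak of the solar spectrum

    l_sun = luminosity_from_flux(flux_at_earth, distance_cm)
    r_sun = radius_from_luminosity(l_sun, temperature_k)
    print(f"L = {l_sun:.2e} erg/s, R = {r_sun:.2e} cm")
```

With these inputs the radius comes out close to 7 × 10^10 cm, in line with the solar radius found from the sun's angular size.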

FIGURE Light from a source spreads out in space. The further from the source the less light per unit area there will be (the source is not as bright). The brightness drops off as one over the distance squared. This is called the inverse square law.

Note that high luminosity stars that have low temperature must, therefore, be quite big. Red Giant stars are an example: they have low surface temperatures and huge sizes (hence the name "Red Giant").

Up to now we have been concerned with thermal radiation. The thermal emission spectrum is called a continuum spectrum because light is emitted over a continuous region of the spectrum. The light from a blackbody is the sum of all the photons emitted from all the molecules and atoms packed together into a solid body. But what if you were to look at the light coming from an individual atom? Then what you would see would be a bit different. Most people have some picture of an atom consisting of a nucleus surrounded by orbiting electrons (like a small solar system). However, the electrons have to be in certain orbits; they can't be in just any orbit (this is a "quantum mechanics" effect and is related to the fact that energy, i.e. photons, comes in discrete packets). When an electron jumps between orbits in an atom a discrete photon of light is emitted or absorbed. (See Figure 4-16 of the text.) The light is emitted if the electron jumps to a lower energy orbit (moves closer to the nucleus) and light is absorbed if the electron moves to a higher energy orbit: if you like, the photon comes in and knocks the electron up a notch. The difference of the energies of the two orbits is equal to the energy of the photon. This means that the light emitted or absorbed has a definite wavelength and hence color. The exact energy of the electron orbits depends on the particular atom the electron is attached to, hence different atoms emit or absorb different wavelengths of light. These wavelengths are known as emission lines or, in the case of absorption, absorption lines. By observing specific emission or absorption lines one can learn that certain elements are present. This means one can analyze the contents of, say, a cloud of interstellar gas solely from the study of the light emitted or absorbed by that cloud.

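Now we come to some basic statements about the various spectra of light that one can observe. We have discussed three types: the continuous spectrum, such as that given off by a blackbody, e.g., the sun; an emission spectrum, when atoms emit discrete colors of light; and an absorption spectrum, when individual atoms absorb discrete colors of light. Kirchhoff's Laws summarize the statements about these spectra:

1. A hot, dense object (a solid, a liquid, or a dense gas) emits a continuous spectrum.

2. A hot, thin (rarefied) gas emits light only at specific wavelengths, producing an emission line spectrum.

3. A continuous source viewed through a cooler, thin gas shows an absorption spectrum: dark lines at the wavelengths that the gas would itself emit.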

Refer to Figure 4-18 in your textbook for a picture of Kirchhoff's laws in action. What is the physical basis for these laws? Emission of light occurs in atoms at specific wavelengths due to the nature of the atomic structure (the electrons in orbit). So if one had a big dense star composed entirely of hydrogen, why don't you just see the hydrogen lines? There are lots of ways that the photons can interact in a dense gas to change their wavelengths (scattering for example). All these complicated interactions (including the emission of light from free electrons interacting with the hydrogen nuclei) produce photons with all sorts of wavelengths. Hence a continuum (thermal or blackbody) spectrum is produced. If the gas is rarefied, then the photon emitted from an individual atom will be able to escape from the gas without being altered and you will see the appropriate emission lines (Law 2). The gas needs to be kept warm so there will be a source of energy to permit the emission of light. The temperature required depends on the emission lines that are going to be produced. Higher energy photons require higher temperatures. So a thin hydrogen cloud heated by a nearby star will produce hydrogen emission lines, but a hydrogen cloud that is cold, sitting alone in interstellar space, will not produce those lines. Law 3 is a bit harder to understand at first. Why does looking at a continuum source through a cloud of gas produce an absorption spectrum? Photons from the continuum source go into the cloud and the atoms absorb precisely those photons that match up with their atomic energy levels. But that same gas also emits light at those energy levels, so shouldn't it all end up making no difference? The difference is that the emission photons are reradiated out in all directions, not just back into your line of sight. The net effect, therefore, is the removal of most of the photons at the absorption line energies from the light which is traveling along your line of sight. Hence, the absorption spectrum.

In the case of the sun, one sees the continuum spectrum coming from the solar surface, or photosphere, with absorption lines produced by gas in the surrounding solar atmosphere. If one looks off the face of the sun at the gas in the surrounding hot corona, the extended atmosphere of the sun, one can see emission lines from hot thin gas.

Thus it is that by observing emission and absorption spectra an astronomer can determine the chemical composition of clouds of gas in space. Other things besides atoms have specific absorption and emission properties. For example, molecules, which consist of atoms bound together (such as water which is two hydrogens bound to an oxygen) have energy levels which can emit and absorb specific wavelengths of light. The situation is analogous to the atom but a bit more complicated. The emission (absorption) lines can be closely packed together yielding emission (absorption) bands. Water molecules have an absorption band in the microwave region and this is exploited by the microwave oven. Microwave photons are absorbed by the water causing the water to increase its energy level (heating it).

If we look at the spectrum of the sun we find emission and absorption lines caused by the presence of specific elements. The strength of a given line depends on several factors: the amount of the element, the temperature and pressure of the gas, atomic physics, and the possible presence of magnetic or electric fields. The types of lines that atoms give off under a particular set of circumstances can be determined to a large extent by experiments in the laboratory. Then when we see those lines in the sun we learn something about the sun's composition. We have learned from spectral data that the sun consists of about 76% hydrogen (H), 22% helium (He) and 2% everything else. This is fairly typical for the universe as a whole. As an interesting historical sidenote, helium was first discovered as an unidentified emission line in the sun's spectrum, observed during the solar eclipse of 1868.

We have now discussed how light can permit one to measure the temperature of stars and planets and learn of their compositions. One can also measure motion toward or away from us by using the Doppler shift. If an object is approaching you, the light waves are "crunched up" a bit, i.e., the wavelength of the light is reduced, which means that the light is shifted towards the blue. If the source of light is moving away from you then the wavelength of the light is stretched out, i.e., the light is shifted towards the red. These effects, individually called the blueshift and the redshift, are together known as Doppler shifts. The shift in the wavelength is given by a simple formula

(Observed wavelength - Rest wavelength)/(Rest wavelength) = (v/c)

Put another way, this just states that the fractional change in wavelength is equal to the velocity of the emitter as a fraction of the speed of light. The rest wavelength is what you would observe from an object at rest with respect to you, and v is the velocity of the object emitting the shifted light. Note that the velocity is positive (away from you) if the shifted wavelength is longer than the original rest wavelength. A note of caution: this formula is appropriate only for velocities significantly less than the speed of light. There is another formula for speeds close to the speed of light, but we won't be using it in this course. The effect (i.e., redshift or blueshift) is qualitatively the same even at these high speeds, however. Also note that the speed determined by the Doppler shift is only the radial velocity, that is, the velocity toward or away from you. Transverse motions do not produce a Doppler shift in this way. Thus the Doppler shift only gives us part of the motion of a given star, namely that motion towards us or away from us. Motion across the sky can only be determined with slow, painstaking observations over very long periods of time (this is called the proper motion of a star).
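As a worked example of the low-speed Doppler formula, the sketch below turns a measured wavelength shift into a radial velocity. The hydrogen line at 656.3 nanometers and the observed values are illustrative numbers chosen for this example, and the function name is invented; the formula is valid only for speeds much less than c, as noted above.

```python
# Rounded speed of light in kilometers per second, as quoted in the text.
SPEED_OF_LIGHT_KM_PER_S = 3.0e5


def radial_velocity_km_s(observed_wavelength: float, rest_wavelength: float) -> float:
    """Radial velocity from (observed - rest) / rest = v / c.

    Both wavelengths must be in the same units. A positive result means the
    source is moving away (redshift); a negative result means it is approaching
    (blueshift). Valid only for speeds much smaller than the speed of light.
    """
    shift = (observed_wavelength - rest_wavelength) / rest_wavelength
    return SPEED_OF_LIGHT_KM_PER_S * shift


if __name__ == "__main__":
    rest_nm = 656.3  # illustrative rest wavelength of a hydrogen line
    for observed_nm in (656.5, 656.1):
        v = radial_velocity_km_s(observed_nm, rest_nm)
        direction = "receding" if v > 0 else "approaching"
        print(f"observed {observed_nm} nm -> v = {v:+.1f} km/s ({direction})")
```

A shift of only 0.2 nanometers already corresponds to a speed of roughly 90 km/s, which gives a feel for how precisely wavelengths must be measured.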

In the case of the sun, its surface is boiling and seething, and the sun itself is pulsing from energy produced in its interior. The velocities of these motions can be directly measured using Doppler shifts. Since the frequency of these pulses, and the way they appear on the surface, depend on the properties of the gas in the solar interior, careful Doppler studies allow us to probe into the interior structure of the sun.

In this section we will be considering the Sun as a prototypical star. We will be most concerned with understanding the basic properties of the Sun, its interior structure, and how astronomers have learned about the Sun. We will not be focusing on how the Sun interacts with the solar system, its effect on the Earth, etc.

Here are some learning goals for the study of the Sun:

Here are some review questions on the Sun:

In this course, Astronomy 124, we will be learning about the contents of the universe, from the relatively small scales of a single star system up to the largest distances known, namely the entire visible universe. Along the way we will encounter various types of galaxies, clusters of galaxies, clusters of stars, interstellar gas and dust, neutron stars and black holes, and, of course, individual stars. The stars, in fact, are a very basic constituent of the universe so it is important to get a good grasp of their properties. The most familiar of all the stars is our Sun. Many of the properties of the Sun will be common to all stars, so we will begin with it.


What are the most obvious properties of the Sun? It's hot and bright. A slightly more subtle point was recognized by ancient astronomers who determined that the Sun moves through the sky on daily and annual cycles, and that these cycles account for the length of the day and the seasons of the year. Many ancient structures and monuments (for example, Stonehenge) are believed to be designed to keep track of these sky motions. With the coming of the Renaissance and the work of Copernicus, Galileo, and Kepler it was realized that the observed motions of the Sun were not a property of the Sun itself, but of the rotation of the Earth and its orbital motion around the Sun. So what are we left with? It's hot and gives off light. Let's try to learn something more.

What is there to know about the Sun? How about its size, mass, composition, internal structure, temperature, source of its heat and light, age, and life history? Pause for a moment and reflect upon the general issue of how one would set about to learn these things. You can't travel to the Sun. You can't get pieces of it to examine. All you can do is observe it, and perform experiments on Earth to help you learn about the laws of nature. With a little thought you might be able to relate the results of your physics experiments back to what you are observing in the heavens. This is the way it is with astronomy.

We begin with a rather straightforward property of the Sun, namely its size and the distance to it. Note, however, that determining even something as simple as the size of the Sun is not so straightforward. You can see the Sun but just how far away is it? If you know the distance to the Sun then the apparent size of the Sun directly gives you its diameter. Alternatively, if you knew how big the Sun was you would know how far away it was. But you know neither. In astronomy it is customary to express the apparent size of something in terms of the angle that its image takes up on the sky. (Apparent size is just how big something looks; it is an observed quantity, not an intrinsic property. The task of the astronomer is to move from what is observed, that is how things appear to us, to the general property, how things really are.)

What are the units of angle? Recall that the total angular measure around a circle is 360 degrees. The angle from (say) the eastern to western horizon (through the point directly overhead, the zenith) is 180 degrees. The Sun, as it turns out, has an apparent diameter of about 32 arc minutes (a minute is equal to 1/60th of a degree; a second of an arc is equal to 1/60th of a minute). Another unit of angle is the radian which is the angle that gives an arc of a circle equal to the radius. The advantage of using the radian as the unit of measure is that you can convert directly from angular size to a relationship between size of the object and the distance to it: Specifically, the diameter of an object divided by the distance to it is equal to its apparent size in radians.

FIGURE: Units of angular measure. The radian is a unit of measure which gives an arc on a circle equal to the radius of the circle. It is about 57.3 degrees in size.


Now, given that, one can use the rules of trigonometry to determine the diameter of the Sun if one knows the distance, or one could determine the distance if one knew the diameter. But how does one determine a distance? In the case of the Sun it was done in the same manner that we determine distances here on the Earth: by the method of triangulation. It is of some historical interest to note that the size of the solar system was first measured by observing the planet Venus crossing over the face of the Sun (in 1761 and 1769) from several locations on the Earth and triangulating to obtain the distance from the Earth to Venus. (The observing expedition to Tahiti was led by Captain James Cook.) Anyway, the average distance from the Earth to the Sun is 150,000,000 kilometers ($1.5 \times 10^8$). The angular diameter of the Sun is 32 arc minutes, which is equal to about 0.009 radians of angle, which means that the Sun is about $1.4 \times 10^6$ kilometers in diameter (about 110 times that of the Earth). Since in astronomy we most often refer to the radius of stars and planets, and we use the units of centimeters (cm), we shall note that the radius of the Sun is $7 \times 10^{10}$ cm.
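The arithmetic behind the Sun's diameter is short enough to check in code. This is a minimal sketch using the distance and angular diameter quoted above; the function names are invented for this example.

```python
import math


def arcminutes_to_radians(arcminutes: float) -> float:
    """Convert an angle in arc minutes (1/60 of a degree) to radians."""
    return math.radians(arcminutes / 60.0)


def diameter_from_angular_size(distance: float, angle_radians: float) -> float:
    """Small-angle relation: diameter = distance x angle (angle in radians).

    The result is in the same units as the distance.
    """
    return distance * angle_radians


if __name__ == "__main__":
    distance_km = 1.5e8                      # Earth-Sun distance from the text
    angle = arcminutes_to_radians(32.0)      # apparent diameter of the Sun
    diameter_km = diameter_from_angular_size(distance_km, angle)
    radius_cm = diameter_km / 2.0 * 1e5      # 1 km = 1e5 cm

    print(f"angle    = {angle:.4f} radians")
    print(f"diameter = {diameter_km:.2e} km")
    print(f"radius   = {radius_cm:.1e} cm")
```

The product of the distance and the angle in radians gives a diameter of about 1.4 million kilometers, and half of that, expressed in centimeters, is the solar radius of about 7 × 10^10 cm.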

FIGURE: The diameter of the Sun and its distance away from us are related by the apparent angular size of the Sun. The ratio of the diameter to the distance is equal to the size of the angle in radians.

Next consider the Sun's mass. Historically it was a major advancement to realize that there was such a property as mass and what it meant to have a certain mass. On Earth we think of mass as the amount of "stuff" something has, or how much it "weighs." Obviously we can't take the Sun and put it on a balance as we might do if we were measuring the mass of some rock. What other properties does mass have? Newton is the scientist credited with the major discovery that mass implies a gravitational attraction between objects with mass, that is, they exert a pulling force on each other. Newton worked this out quantitatively and he determined the mathematical relationship

$$ F_{\text{gravity}} = \frac{G M m}{R^{2}} $$

describes the force of gravity between two objects of mass M and m and separated by a distance R (the term G is a constant that relates the units of mass and distance to those of force). Note that Newton's Law of gravity tells us that the gravitational force between two objects rapidly becomes weaker as those objects become further apart. It also tells us that the force of gravity is stronger in direct proportion to the mass M.

Hence we measure the Sun's mass by its gravity. The Earth is maintained in its orbit around the Sun by the gravitational attraction of the Sun. Newton discovered that if there are two bodies with mass m1 and m2 orbiting each other, then the size of the orbit (given by a) is related to the period of the orbit (specified as P) by the following formula:

$$ P^{2}\, G\, (m_1 + m_2) = 4 \pi^{2} a^{3} $$

The general relationship that the orbital period squared is proportional to the orbital radius cubed is originally due to Kepler and is known as Kepler's Third law. Throughout this semester we will be concerned with determining the mass of things, from other stars, to black holes, to whole galaxies and even the whole universe. The only way we have to measure mass of such objects is through the gravitational force that they exert on other objects. (Note: this material is covered in more depth in Astronomy 121, and is discussed in Chapter 2 of your text. We introduce it here simply to show you how the mass of the Sun can be determined, and by extension the mass of stars in orbiting binary star systems. Indeed, forms of this law allow us to estimate the masses of entire galaxies. So this is an important concept although we must leave out the more extensive discussion of Newton's laws which would permit a more thorough understanding of the derivation of Kepler's law.)

Using Kepler's law, the period of the Earth's orbit (1 year), the size of the orbit (the distance to the Sun given above) and a value for the constant G in the equation permits us to obtain, through simple algebraic manipulation, the value of m1 + m2, the combined mass of the Earth and the Sun. This will be very nearly equal to the mass of the Sun since the mass of the Earth is completely insignificant by comparison. (If you were weighing an elephant by more or less conventional means then the presence of a few dust specks on his back would hardly throw the measurement off by a significant amount.) As it turns out the mass of the Sun is just about 2 × 10^33 grams (gm). For comparison, your own mass is about 5 × 10^4 gm.
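
The "simple algebraic manipulation" amounts to solving the orbital equation for m1 + m2. A minimal sketch follows, working in centimeters, grams and seconds. The value of G and the number of seconds in a year are standard values supplied here as assumptions, since the text does not quote them; the function name is illustrative.

```python
import math

G_CGS = 6.674e-8            # gravitational constant, cm^3 g^-1 s^-2 (assumed standard value)
SECONDS_PER_YEAR = 3.156e7  # one year in seconds (assumed standard value)
EARTH_SUN_CM = 1.5e13       # 150,000,000 km from the text, converted to cm


def total_mass_from_orbit(period_s, orbit_size_cm):
    """Solve P^2 G (m1 + m2) = 4 pi^2 a^3 for the combined mass in grams."""
    return 4.0 * math.pi**2 * orbit_size_cm**3 / (G_CGS * period_s**2)


sun_plus_earth_g = total_mass_from_orbit(SECONDS_PER_YEAR, EARTH_SUN_CM)
print(f"m1 + m2 = {sun_plus_earth_g:.2e} g")
```

Because the orbit size enters as a cube and the period as a square, small errors in the measured distance matter more than small errors in the period.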

(The mass of the Earth can be similarly determined by the orbits of satellites around it, or, more simply from the acceleration due to gravity at the Earth's surface. What was not known for a long time was the value of the constant of proportionality in Newton's law, namely G. This was determined by Henry Cavendish in the 1790s by measuring the attractive force between two objects of known mass. As you should imagine, this required a very sensitive experiment because the force of gravity is very weak--G is very small. This experiment has sometimes been referred to as "weighing the Earth" but it might better be referred to as "weighing the universe" because it was the final key piece needed to use Kepler's orbital equation to determine the masses of the Earth, the Sun, indeed the whole universe.)

Given the mass of the Sun and its size we can compute its density. The density of something is equal to its mass divided by its volume. Specifically, for a sphere we have

$$ \rho = \frac{M}{\frac{4}{3} \pi R^{3}} $$

where the Greek rho is our symbol for density and R is the radius of the sphere. If you plug in the numbers for the Sun you get a density of 1.4 gm/cm^3. For comparison, water has a density of 1 gm/cm^3, and rock around 3 gm/cm^3. So the average density of the Sun is not all that different from yours. (Your density is about equal to that of water, as evidenced by the fact that the human body is nearly neutrally buoyant in water; you float but not well enough to keep your nose out of the water. Does it surprise you that the Sun is about as dense as water? Did you think it might be denser because it's big, or less dense because it's floating in space? Beware of casual thinking and naive expectations in astronomy!)
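
Plugging in the numbers can be done directly. The sketch below uses the mass and radius of the Sun quoted earlier in these notes; the function name is illustrative.

```python
import math


def mean_density(mass_g, radius_cm):
    """Average density of a sphere: mass divided by (4/3) pi R^3, in gm/cm^3."""
    volume_cm3 = (4.0 / 3.0) * math.pi * radius_cm**3
    return mass_g / volume_cm3


sun_density = mean_density(2e33, 7e10)   # mass and radius of the Sun from the text
print(f"mean density of the Sun = {sun_density:.2f} gm/cm^3")
```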

Now let's return to the properties of the Sun that we first mentioned: heat and light. Why is the Sun giving off heat and light? The answer for the ancients was in terms of a familiar phenomenon: the Sun must be on fire. A more appropriate analogy for today's more sophisticated student might be the filament of a light bulb (so long as you don't conclude that the Sun is powered by electricity.) The point is that you already have experienced the fact that sufficiently hot things give off visible light. The Sun, like all stars, is just a hot ball of gas that is giving off blackbody radiation. Let's examine how the Sun is hot and why.

Visible Parts of the Sun

When we look at the Sun the surface that we see is called the photosphere. We can only see down into the Sun until the opacity is large enough to scatter the light. The deeper that one looks the higher the temperature. This accounts for the phenomenon known as limb darkening, which refers to the fact that the Sun appears darker out on its edge. This is because out at the edge you have to look through a thicker layer of solar atmosphere (because you are looking slantwise through it) when compared with the center of the Sun. You see mainly the higher layers of the photosphere where the temperature is lower, at about 4000K. At the center you are looking deep into the photosphere and the temperature is higher and consequently the Sun appears much brighter. (The same sort of thing happens when you look at the stars in the sky; stars overhead are less obscured than stars on the horizon because the stars on the horizon have had to pass through a greater column of the Earth's atmosphere and so are subjected to more scattering of their light.)

The surface of the Sun has a mottled appearance called "granulation." This represents the upper end of the convection cells that are in the outer layers of the Sun. Convection is a process by which heat is transported from hot to cool regions through the physical motion of the gas. Here the convection cells are places where hot gas boils up from deeper within the Sun. The center of a granule, or convection cell, is where the rising hot gas is. Since this gas has a higher temperature it is brighter than the region surrounding the cell, where cool gas is sinking.

Another sort of mottling that one can see on the Sun is sunspots. Sunspots appear dark because they are at a much lower temperature than the surrounding photosphere, specifically about 1500K cooler. Recall that the energy flux from a blackbody goes like temperature to the fourth power, so a modest change in temperature amounts to a substantial reduction in the emitted flux or brightness. Hence sunspots are dark. Sunspots are believed to be caused by powerful magnetic fields poking up through the Sun's surface. Sunspots follow something known as the solar cycle, so that there are periods of maximum sunspot activity about every 11 years. The causes of the sunspot cycle are not well understood.
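
To see how much a 1500K drop matters, one can compare blackbody fluxes using the fourth-power rule. The sketch below assumes a surrounding photosphere temperature of 5800K, which is a typical value supplied here as an assumption and is not stated in this passage.

```python
def flux_ratio(t_spot_k, t_surround_k):
    """Ratio of blackbody fluxes, using flux proportional to T^4."""
    return (t_spot_k / t_surround_k) ** 4


T_PHOTOSPHERE_K = 5800.0                  # assumed typical photosphere temperature
T_SPOT_K = T_PHOTOSPHERE_K - 1500.0       # "about 1500K cooler", from the text

spot_to_surround = flux_ratio(T_SPOT_K, T_PHOTOSPHERE_K)
print(f"a sunspot emits about {spot_to_surround:.0%} of the surrounding flux")
```

Under that assumption a spot emits less than a third of the flux of its surroundings, so it looks dark only by contrast; seen alone it would still glow brightly.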

Above the photosphere lies a region called the chromosphere. It has this name because this layer of the Sun's atmosphere can be seen during the last moments before totality in a solar eclipse as a pinkish layer (hence color, or chromo). The pink is due to the red emission line of hydrogen (the so-called "Balmer" line). The chromosphere is about 2000 km thick. Although it has a lower density than the photosphere, the chromosphere has a higher temperature. Indeed from the base to the top of the chromosphere the temperature increases to about 100,000 degrees. The chromosphere also features spike-like jets of gas called spicules that can stick up as much as 10,000 km above the photosphere.

Finally the outermost layer of the Sun is the corona, a region of diffuse glowing gas which can only be seen from Earth when the much brighter glare from the Sun is blocked by a solar eclipse. (The corona can be studied all the time by satellites in space through the use of an obscuring disk to block out the Sun and make an artificial eclipse.) The corona extends for as much as a million km around the Sun and has temperatures as high as two million degrees. In fact some regions of the corona are sufficiently hot that they give off X-rays. The corona interacts with many of the more dynamic aspects of the solar atmosphere. Examples include prominences, which are great arcs of gas that extend outwards from the Sun, and solar flares which are great explosions and jets of gas from the solar surface. All these contribute to solar activity which is tied in somehow with the sunspot cycle.

The Solar Interior

The only visible layers of the Sun are the photosphere and the solar atmosphere. How is it possible for astronomers to develop an understanding for the nature of the interior of the Sun? They do it by using the known laws of physics and the limited amount of information that we have measured directly from the Sun, namely its mass, radius, composition, surface temperature, and luminosity.

Two more observations help us with our effort to model the Sun. The first is that the Sun appears to have a constant radius; it is neither expanding nor contracting. Since the Sun is a ball of hot gas wouldn't you expect it to expand outward into space? Yes, if there were no other force acting on that gas. The other force is the force of gravity which is pulling the gas together. The Sun is in a state of balance between the pressure forces of the gas and the gravitational force due to the Sun's mass. This balance is called hydrostatic equilibrium. Now pressure is a force per unit area; you exert pressure on a wall if you put your hand on it and push. That pressure force is exerted only over the surface area covered by your hand. Pressure produces a net force, which in turn means a net acceleration (from Newton's laws of motion) only if it is not in balance with some other force. Isometric exercises are an example of force per unit area in balance. Another example is provided by differences in water or air pressure. At great depths the pressure of water would crush a scuba diver's lungs if they contained only the air pressure corresponding to the surface. Scuba gear provides air at the same pressure as the surrounding water, which allows one to breathe. The force of the interior air pressure plus the forces exerted by the walls of the lungs are in balance with the sea water pressure. There is no net force on the lungs. Now back to the Sun. Gravity is pulling the mass of the Sun downward. In order for pressure to counterbalance that force the pressure at the center of the Sun must be very large and decreasing as one goes outward through the Sun. So the concept of hydrostatic equilibrium allows us to predict immediately that the Sun has a high pressure center.

The next fact that we will use is that the Sun is radiating away a great deal of luminosity but remains at a constant temperature and constant luminosity. Since energy is flowing out from the Sun and it is not cooling off, energy must be generated somewhere inside the Sun. Since the Sun is not heating up that energy generation rate must be equal to the Sun's luminosity. This is the concept of thermal equilibrium.

The source of the Sun's energy was a mystery for many years. Evidence found on the Earth suggested (what was then regarded as) a tremendously old age for the Earth. Fossil algae and bacteria were dated at ages in excess of a billion years. This meant that the Sun must have been burning much as it is today for at least as long. If the Sun were burning by chemical reactions it wouldn't last more than a few thousand years. The most reasonable (but incorrect) answer was that the Sun's luminosity came from gravitational contraction. If the Sun were slightly out of balance so that gravity was pulling it into a smaller and smaller ball, then the gas composing the Sun would be compressed to ever increasing densities. When you compress a gas it heats up. For the Sun that heat could radiate away as the Sun's luminosity. However, the entire energy available from such gravitational contraction would power the Sun for only 30 million years. This is a lot longer than chemical reactions would last but still too short for the age of the Earth.

The correct answer was arrived at in the twentieth century. The first step was the development of Einstein's theory of relativity which showed the equivalence of mass and energy (as generally stated in his famous equation E = mc^2). Given this equivalence one can then imagine that it might be possible to convert mass directly to energy (through some means). For example, given that the mass of the Sun is 2 × 10^33 grams and the speed of light is 3 × 10^10 cm/sec, we find that the equivalent energy for the entire mass of the Sun is 1.8 × 10^54 ergs (1 erg = 1 gm (cm/sec)^2). Since the Sun's luminosity is 4 × 10^33 ergs/sec this gives a possible lifetime of 4.5 × 10^20 sec, which is about 14 thousand billion years. This at least allows the possibility of having a Sun as old as the Earth!
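
The arithmetic in this estimate is easy to reproduce. The sketch below uses the mass, speed of light and luminosity quoted in the text; the number of seconds in a year is a standard value supplied as an assumption, and the function names are illustrative.

```python
SECONDS_PER_YEAR = 3.156e7   # assumed standard value


def rest_mass_energy_erg(mass_g, c_cm_s=3e10):
    """E = m c^2 in ergs, with mass in grams and c in cm/sec."""
    return mass_g * c_cm_s**2


def lifetime_years(energy_erg, luminosity_erg_s):
    """Time to radiate away a given energy at a constant luminosity."""
    return energy_erg / luminosity_erg_s / SECONDS_PER_YEAR


sun_energy_erg = rest_mass_energy_erg(2e33)          # solar mass from the text
sun_lifetime_yr = lifetime_years(sun_energy_erg, 4e33)  # solar luminosity from the text
print(f"energy = {sun_energy_erg:.1e} erg, lifetime = {sun_lifetime_yr:.1e} years")
```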

In fact the Sun is not converting all its mass directly into energy. The only known mechanism to convert mass completely into energy is matter/antimatter annihilation, and this is ruled out because the Sun is composed entirely of matter. Instead the Sun uses the mechanism of nuclear fusion, wherein atoms of a light element (in this case hydrogen) are joined together to form a heavier element (helium). In the Sun the predominant energy generation mechanism is the fusion of four hydrogen atoms into one helium atom. In this process a small amount of the total mass (0.7%) is converted into energy.
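
A rough sketch of what the 0.7% figure implies for the Sun's lifetime is given below. The `burn_fraction` parameter, and the illustrative value of 0.1 for it, are assumptions introduced here and not taken from the text; they stand for the idea that only part of the Sun's hydrogen may be hot enough to fuse.

```python
SECONDS_PER_YEAR = 3.156e7   # assumed standard value
C_CM_S = 3e10                # speed of light in cm/sec, as quoted in the text


def fusion_lifetime_years(mass_g, luminosity_erg_s,
                          efficiency=0.007, burn_fraction=1.0):
    """Lifetime if `burn_fraction` of the mass fuses, converting
    `efficiency` of that fused mass into energy at constant luminosity."""
    energy_erg = burn_fraction * efficiency * mass_g * C_CM_S**2
    return energy_erg / luminosity_erg_s / SECONDS_PER_YEAR


# Upper limit: every gram of the Sun is eventually fused.
all_mass_years = fusion_lifetime_years(2e33, 4e33)

# Hypothetical case: only a tenth of the mass ever takes part.
core_only_years = fusion_lifetime_years(2e33, 4e33, burn_fraction=0.1)

print(f"{all_mass_years:.1e} years (all mass), {core_only_years:.1e} years (one tenth)")
```

Even with only a fraction of the mass taking part, the result is far longer than the 30 million years available from gravitational contraction.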

The process of nuclear fusion is related to but different from the process of nuclear fission, wherein large atoms are broken apart and the resulting pieces have less mass than the original atom. Nuclear fission occurs (for example) when uranium atoms split apart. Nuclear fission powers nuclear reactors and atom bombs. Controlled nuclear fusion reactors do not exist at this time although they remain the subject of considerable research. Uncontrolled nuclear fusion reactors do exist: they are called H-bombs, or thermonuclear weapons. To give you some idea of the power of E = mc^2, if we consider your basic 10 megaton nuclear explosion (pictured), the energy given off (10 megatons) represents the energy found in about 470 grams of matter (roughly one pound). This would be produced through the fusion of just under 70 kilograms of hydrogen.

Although it would perhaps be better if humanity left fusion to the Sun, considerable research into it has been done (as you might suspect), and this has coincidentally led to a better understanding of the interior of the Sun and other stars. The main point is that in order to force atoms to fuse into new atoms one needs to overcome the electrical forces of the nucleus. Atomic nuclei have positive charges from the protons in them and since like charges repel each other, it is difficult to force two protons together. Doing so requires considerable energy, that is high temperatures. On Earth in order to get H-bombs to work one has to set off an A-bomb to generate the millions of degrees of temperature necessary for fusion (such A-bombs are euphemistically known as "triggers.") In the Sun, such temperatures come naturally but only at the Sun's core. Hence we expect that the energy generation process in the Sun takes place only in its very center. The temperatures in the center of the Sun are in excess of 10 million degrees and the densities go as high as 160 gm/cc.

The fusion process that the Sun uses is known as hydrogen burning by the proton-proton chain because it depends on a reaction that combines two protons into one deuterium atom. One proton is the nucleus of a hydrogen atom. A deuterium atom is "heavy hydrogen" which is composed of one proton and one neutron. The reaction is

$$ {}^{1}\mathrm{H} + {}^{1}\mathrm{H} \longrightarrow {}^{2}\mathrm{H} + e^{+} + \text{neutrino} $$

where the symbols stand for Hydrogen with one proton (1H), hydrogen with a proton and a neutron (2H), also known as deuterium, a positron (e+) which is the positively charged antimatter form of an electron, and a neutrino. This reaction proceeds at a rather slow pace; it depends on turning a proton into a neutron and this is a reaction with a very slow rate (it involves the so-called weak nuclear force). The positron annihilates with an electron producing some energy, and the neutrino escapes from the Sun with no further interaction. Neutrinos have a very difficult time interacting with anything so in the Sun the neutrinos generated by nuclear reactions represent an energy loss. Anyway, as the deuterium atoms are produced they can react with protons (ordinary hydrogen) to produce helium 3 which is two protons and one neutron, thusly

$$ {}^{1}\mathrm{H} + {}^{2}\mathrm{H} \longrightarrow {}^{3}\mathrm{He} + \text{gamma ray} $$

Energy is carried off by the gamma ray photon. When enough Helium 3 atoms have accumulated they can combine to form one Helium 4 (which is what we regard as ordinary Helium) plus two protons (hydrogen)

$$ {}^{3}\mathrm{He} + {}^{3}\mathrm{He} \longrightarrow {}^{4}\mathrm{He} + {}^{1}\mathrm{H} + {}^{1}\mathrm{H} $$

The net reaction is that four hydrogen nuclei turn into one helium nucleus plus two positrons, two neutrinos, and some energy in the form of two gamma rays and high speed motion of the reaction products. Later on in our study of stars we will discuss other types of nuclear fusion.
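
The fraction of mass converted to energy by this net reaction can be estimated by comparing the mass of four hydrogen atoms with that of one helium atom. The atomic masses and the energy equivalent of the atomic mass unit below are standard reference values supplied here as assumptions; they are not quoted in the text.

```python
# Standard atomic masses in atomic mass units (u); assumed reference values.
MASS_H1_U = 1.007825      # ordinary hydrogen
MASS_HE4_U = 4.002602     # ordinary helium
MEV_PER_U = 931.494       # energy equivalent of one atomic mass unit


def pp_chain_mass_defect():
    """Return (fraction of mass converted, energy released in MeV)
    when four hydrogen atoms become one helium atom."""
    mass_in = 4.0 * MASS_H1_U
    mass_lost = mass_in - MASS_HE4_U
    return mass_lost / mass_in, mass_lost * MEV_PER_U


fraction_converted, energy_mev = pp_chain_mass_defect()
print(f"fraction converted = {fraction_converted:.2%}, energy = {energy_mev:.1f} MeV")
```

The small share of this energy carried off by the two neutrinos escapes the Sun without heating it, so the energy actually deposited in the gas is slightly less than the total computed here.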

FIGURE: The proton-proton chain illustrated. Protons are grey spheres, neutrons black spheres, the positron and neutrino labeled little black spheres.

The energy released by these nuclear reactions must make its way out through the Sun from the core to the surface through the process known generically as energy transport. Everyone is instinctively aware of this process in terms of heat flowing from hot things to cold things. Specifically this means that energy is moving from regions of high energy to regions of low energy, and temperature is a measure of the average energy. There are three main processes by which energy is transported: conduction, convection and radiation.

Let's consider radiation transport first. Radiation transport is heat transport by photons (light). We discussed this briefly when we mentioned that a blackbody at a higher temperature than its surroundings would send out photons to those surroundings and eventually cool down to a temperature equal to the surroundings (if not continually supplied with new heat from some energy source). Radiant heat is the heat you feel coming off from a glowing fire, or the heat you feel while standing in the Sun. In both cases you are absorbing photons coming to you from a source at high temperature. (Note: to protect yourself from radiant heating, you should surround yourself with nonabsorbing, i.e., reflective material. Fire protection suits are made of shiny reflective material.)

In the Sun photons are produced in great abundance in the core. These photons then diffuse outward from the center of the Sun to the surface where they escape into space. The photons do not simply stream outward. They are scattered by the dense gas in the Sun and so must work their way outward by a series of random scatterings. The scattering is mostly off of electrons in the gas that have been stripped off of the atoms by the high temperature (an ionized gas known as a plasma). This "resistance" to the free travel of photons through the gas is called opacity, as in opaque. The higher the opacity of the material, the harder it is for light to move through it. (Example: dry air has a very low opacity, and light travels a long way through it before being scattered. Hence you can see a long way through the air. Air containing water droplets, i.e. fog, has a much larger opacity. Light is scattered after a relatively short distance. Imagine the interior of the Sun like a bright, hot, dense fog of plasma.) It takes about 100,000 years for photons to work their way out from the center of the Sun to the surface.
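
The random scattering described above can be turned into an order-of-magnitude estimate. The sketch below assumes a simple random walk with a single, constant distance between scatterings (the mean free path); in the real Sun this distance varies enormously with depth, so the numbers are illustrative only. The function names are hypothetical.

```python
C_CM_S = 3e10                # speed of light, cm/sec
R_SUN_CM = 7e10              # radius of the Sun from earlier in these notes
SECONDS_PER_YEAR = 3.156e7   # assumed standard value


def diffusion_time_years(radius_cm, mean_free_path_cm):
    """Random-walk escape time: about (R / l)^2 steps, each lasting l / c."""
    n_steps = (radius_cm / mean_free_path_cm) ** 2
    return n_steps * mean_free_path_cm / C_CM_S / SECONDS_PER_YEAR


def implied_mean_free_path_cm(radius_cm, time_years):
    """Invert the estimate: which constant step length gives this escape time?"""
    return radius_cm**2 / (C_CM_S * time_years * SECONDS_PER_YEAR)


step_cm = implied_mean_free_path_cm(R_SUN_CM, 1e5)   # 100,000 years from the text
straight_line_s = R_SUN_CM / C_CM_S                  # escape time with no scattering

print(f"implied step length = {step_cm:.3f} cm")
print(f"unscattered travel time = {straight_line_s:.1f} sec")
```

In this simplified picture a photon travels only a fraction of a millimeter between scatterings, whereas an unscattered photon would cross the same distance in a couple of seconds.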

Another heat transport mechanism is conduction. In conduction heat is carried not by photons but by other particles, most often by fast moving electrons. Suppose you have a lump of hot material which has electrons jiggling very rapidly about within that material. You place it next to a cold lump which has slow moving electrons. The fast moving electrons at the interface between these two materials collide with some of the colder, slow moving material and these collisions exchange energy. The cold material starts to move faster and the hot material a little slower. The electron collisions are trying to even things out. You will be familiar with conduction as a heat transport mechanism because it is what makes some materials hard to touch when hot and other materials easy to touch. As you know, metals heat up fast and transmit heat fast. This is because they have lots of electrons that can move easily about within the metal. This is the same reason that they conduct electricity well. Conductors of electricity will also conduct heat. Similarly electrical insulators are often good heat insulators. You may have copper cooking pans but the handles are composed of insulating plastic. Even if a good insulating material is at high temperature you can handle it briefly because the rate of heat exchange between you and that material is very slow. This, by the way, is the secret to walking on glowing coals with your bare feet. But woe unto anyone who walks on glowing metal. It turns out that the Sun is not a particularly good conductor of heat, at least compared to radiative transport by photons. It is a general rule that things will always use the most efficient and fastest mechanism to transport heat in an attempt to come into temperature equilibrium. So in stars, so long as radiative transport is more efficient than conduction, conduction effects will be negligible. There is a certain class of star where conduction is important but we will delay that discussion until later.

The last major heat transport mechanism is convection. Convection is the physical transport of heat by moving large blobs of hot material from the hot region to the cold region. (If you carry a bucket of hot water along you are physically transporting heat.) In a star convection occurs whenever there is a tendency for hot material to rise and cold material to sink. What is required is that the hot gas is less dense than the cold gas and so weighs less. This produces an overturning and circulation in the gas. A good example is the hot air balloon. If you heat the air in a hot air balloon then that air will be less dense than the cold surrounding air that the balloon has displaced. The balloon will be buoyant and will rise up through the atmosphere. This is a particular manifestation of the general rule for the atmosphere that most people are familiar with: hot air rises and cold air sinks. Another example is the phenomenon of boiling water. The hot water at the bottom of the pot rises up to the top and the cold water sinks. In this way heat from the bottom of the pot is transported rapidly up to the top of the pot. Convection is a very efficient means of heat transport. Think how rapidly boiling stops if you remove a pot from the stove (its source of heat).

In the Sun convection is also an important process. Convection becomes important whenever the opacity goes up and the rate of radiative diffusion becomes less. Then heat builds up and the boiling begins. Radiative diffusion is most important in the inner 80% of the Sun, convection in the outer 20%.

All the concepts we have been discussing can be expressed in terms of mathematical equations. Collectively these are known as the equations of stellar structure. These include: (1) The force balance equation for hydrostatic equilibrium. In this equation the gas pressure forces, which are determined by the density and temperature of the gas, are in balance with the gravitational force whose magnitude is determined by the amount of mass inside the star. (2) The energy conservation equation, which simply says that the luminosity of the star must be equal to the amount of energy being generated inside the star. Energy generation is determined by the nuclear reactions inside the star and these in turn depend upon the composition (how much hydrogen versus helium for example), the temperature (needs to be high) and the density (also needs to be high). (3) The energy transport equation, which says how rapidly energy (heat) can be transported through the star at any one moment. As we have discussed, the rate of energy transport depends on such things as the gas opacity which in turn depends on density and pressure. (4) The mass conservation equation: when you have calculated your way to the surface of the star you had better have a total mass that is equal to the mass of the star. In the case of the Sun this is one solar mass, M☉.

Given these equations the astronomer programs a computer and then works towards a solution to each of the equations subject to the condition that the solar model must have the same luminosity, mass, radius, and surface temperature as the Sun (it wouldn't be a very good model otherwise). The outcome is data giving the temperature, density, pressure, luminosity, etc. at every radius throughout the Sun. Solar models tell us that the Sun generates almost all of its energy in its core, the inner 20% of its radius. Most of the mass is contained in the inner 60% of the radius. The upper 20% consists of the convective zone which serves to transport the inner heat out to the surface of the Sun.
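
To give a flavor of what "programming a computer" to build a model involves, here is a deliberately oversimplified sketch. It steps outward through thin shells using only two of the four equations (mass conservation and hydrostatic equilibrium) and assumes a constant density throughout, which is not true of the real Sun. Real solar models also solve the energy generation and energy transport equations and let density vary with depth, so the central pressure found here is far too low. The value of G and all function names are assumptions for illustration.

```python
import math

G_CGS = 6.674e-8  # gravitational constant in cm^3 g^-1 s^-2 (assumed standard value)


def integrate_uniform_sphere(mass_g, radius_cm, n_shells=20_000):
    """Step outward through thin shells of a constant-density sphere.

    Accumulates the mass equation dm/dr = 4 pi r^2 rho and the
    hydrostatic equation dP/dr = -G m rho / r^2. The pressure is taken
    to be zero at the surface, so the summed pressure drop equals the
    central pressure. Returns (total_mass_g, central_pressure_cgs).
    """
    rho = mass_g / ((4.0 / 3.0) * math.pi * radius_cm**3)
    dr = radius_cm / n_shells
    enclosed_mass = 0.0
    pressure_drop = 0.0
    for i in range(n_shells):
        r_mid = (i + 0.5) * dr                          # middle of this shell
        shell_mass = 4.0 * math.pi * r_mid**2 * rho * dr
        mass_at_mid = enclosed_mass + 0.5 * shell_mass  # mass pulling on the shell
        pressure_drop += G_CGS * mass_at_mid * rho / r_mid**2 * dr
        enclosed_mass += shell_mass
    return enclosed_mass, pressure_drop


def central_pressure_uniform(mass_g, radius_cm):
    """Closed-form answer for the same toy model: 3 G M^2 / (8 pi R^4)."""
    return 3.0 * G_CGS * mass_g**2 / (8.0 * math.pi * radius_cm**4)


model_mass, model_pressure = integrate_uniform_sphere(2e33, 7e10)
exact_pressure = central_pressure_uniform(2e33, 7e10)
print(f"mass recovered = {model_mass:.3e} g")
print(f"central pressure: numerical {model_pressure:.3e}, exact {exact_pressure:.3e}")
```

The check that the summed shell masses reproduce the input mass is the toy version of the mass conservation condition, and comparing the numerical pressure with the closed-form answer is a simple way to confirm the stepping scheme is working.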

FIGURE: A cross-section of the Sun. The major sections are the core, where nuclear reactions occur, the radiative transport zone and the convective zone. The convective zone reaches out to the photosphere where the photons finally escape and fly out into space. The photosphere is what we see as the surface of the Sun.

Just how certain are astronomers as to the accuracy of their solar models? There are many things that need to be understood accurately in order to get a good model. The biggest challenges are: (1) accurate nuclear reaction rates: how fast do the energy-generating nuclear reactions take place given a certain density, temperature and composition? (2) accurate opacities: how do photons interact with gas at a certain temperature and density? (3) How does convection work in detail? How can you accurately describe regions of hot, overturning, boiling gas? How fast does it transport energy? How fast does it mix? Obviously if you get some of this wrong you can get a wrong model. For example suppose you find that nuclear reactions occur a little faster than you previously thought. This means that you could get the same energy generation from a smaller temperature and density in the core of the Sun. But if the density and temperature are smaller then the size and mass of the solar model would have to change too. The model might have the same mass as the Sun but a larger radius perhaps. Or perhaps it would have the same radius but a more condensed core with a larger percentage of the solar radius in the low pressure, low density convective envelope. We will later be talking about a whole variety of stars that have different masses, luminosities, radii, and internal structures. For all these different stars models have been developed to understand their interiors and their evolution.

Must we solely rely on models to gain understanding of the center of the Sun? As mentioned earlier there is no way to look into the center of the Sun using light. But the neutrinos that are released by the nuclear reactions in the Sun pass right through the Sun and emerge immediately. This is because neutrinos have a very hard time interacting with anything so they stream through matter as if it weren't there. If we could nevertheless detect some of those neutrinos we would be detecting something from the very heart of the Sun. However, the same property that allows the neutrinos to escape from the Sun means that they are very difficult to detect (detecting something means that you made it interact with something to produce a measurable response).

The solar neutrino experiment of astronomer Ray Davis is based upon the fact that a neutrino can interact with a chlorine atom to turn it into an argon atom. The odds of that interaction occurring are very small, but if you have a large number of chlorine atoms and a huge number of neutrinos passing through then you might expect to get an event every now and then. The experiment consists of a 100,000 gallon tank of cleaning fluid (which contains chlorine) in a mine in South Dakota. The tank is down in the mine to help shield it from cosmic rays. The mile of rock overhead doesn't represent an obstacle for the neutrinos. From the solar models and the expected rate for the neutrino-chlorine interaction we can predict that the tank will see about one argon atom produced every day. About every month the tank is purged of its argon and the total amount of argon is measured. Notice that this is not an easy experiment. We are talking about extracting something like 30 atoms of argon from 100,000 gallons of cleaning fluid. Despite the difficulties, neutrinos have been detected over the experiment's long run (it has been going since the late 1960s). However, the number of neutrinos detected is only one-third the amount predicted. What does this mean? Could there be problems with the equations of stellar structure, or the values of the nuclear reaction rates? Is there something about neutrinos that we don't understand? Are the Sun's nuclear reactions reduced for some reason? Is there new physics we haven't included in our models?

Additional clues are expected from the operation of new neutrino detectors. These are based not on chlorine but on the element gallium, and they are considerably more sensitive than the chlorine experiment, which is able to detect only the most energetic neutrinos. However, preliminary results are more or less consistent with Davis's result, namely that fewer neutrinos are detected than expected from the theoretical calculations. At the present time physicists believe the most probable explanation lies in the properties of the neutrinos rather than those of the Sun. However, the experiments continue to operate, and in the next few years astronomers hope that the situation will be further clarified.

We now begin to apply some of the things we have learned so far in this course to the study of stars. The understanding of light that we have developed will allow us to classify and characterize stars according to such properties as temperature, brightness, spectral lines, and luminosity. These properties can, in turn, be compared to the Sun as our standard star.

Here are some learning goals for the study of the Stars:

Here are some review questions on Stars:

Photometry

Luminosity, as we have already discussed, is the energy given off per unit time by a star. The concept of brightness is energy per unit time per area. We would like to know a star's intrinsic luminosity, but all we can measure directly is its apparent brightness.

To see the difference between luminosity and brightness, imagine one of those old-time street lamps that consists of a large frosted globe. At the center of that globe there is a small lightbulb, but you can't see it directly because of the globe. The globe gives off plenty of light, but no part of its surface is particularly bright. However, if you remove the globe and look at the small filament at the center of the bulb, it appears very bright. The total amount of energy per second is the same in both cases (and all of it comes from the bulb), say 100 Watts. But the brightness depends on how big the surface is that is radiating the light. The same thing is true for the frosted lightbulbs you have at home. You can look at such a bulb directly, since it isn't too intensely bright, but in a bulb with clear glass the filament inside is too bright to look at directly.

Brightness is the energy emitted per unit time per unit area. The filament of a lightbulb has a high brightness because it has a very small surface area. When you look at the filament, its image is focused onto the retina of your eye and that image contains the full painful brightness of the filament. As you back away from the bulb, the area of your retina that is affected becomes smaller as the apparent size of the bulb shrinks. But the smaller area on your retina will still be hit by light of the same brightness. (This is why looking at the Sun is always dangerous. It is intensely bright and the image of the Sun on the retina will damage the retina. The danger is greater during a partial eclipse only because the total light is reduced enough that you could look at the Sun without being overwhelmed. But any bit of the Sun that still pokes around the face of the eclipsing Moon will be just as bright, and hence just as damaging to the portion of the retina upon which the light falls.)

Light bulb frosting shields your eye from the filament and reduces the brightness of any part of the bulb to a lower level, but without reducing the total light given off, i.e., the luminosity. What is happening is that a certain amount of energy is given off by the filament (per second) and that energy spreads out through space. The further out you are in space the less energy per unit area there will be. The frosted bulb intercepts the light and spreads it out uniformly at a lower brightness. Similarly, this explains why a single light bulb will effectively light a small room but not a very big one. The amount of light hitting the walls per unit area in a big room will be very small. This effect of the energy spreading out over space is known as the inverse square law, because the apparent brightness drops off as the inverse distance squared from the light source.
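As a rough illustration of the inverse square law, the short Python sketch below spreads the 100 Watts of the lightbulb example over spheres of different radii. It assumes the bulb radiates equally in all directions and that all 100 Watts emerge as light; the function name and the sample distances are chosen here for illustration only.

```python
import math

def apparent_brightness(luminosity_watts, distance_m):
    """Energy per second per unit area (W/m^2) at a given distance.

    Assumes the source radiates uniformly in all directions, so the
    energy is spread over a sphere of area 4*pi*r^2.
    """
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    return luminosity_watts / (4.0 * math.pi * distance_m ** 2)

bulb_luminosity = 100.0  # Watts, as in the street lamp example

# Walls of a small room (2 m away) versus a larger room (4 m away).
near_wall = apparent_brightness(bulb_luminosity, 2.0)
far_wall = apparent_brightness(bulb_luminosity, 4.0)

print(f"at 2 m: {near_wall:.3f} W/m^2")
print(f"at 4 m: {far_wall:.3f} W/m^2")
print(f"ratio near/far: {near_wall / far_wall:.2f}")
```

Doubling the distance cuts the energy per unit area to one quarter, which is why the walls of the bigger room are so much more dimly lit.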

Figure: The inverse square law for light. As the light from a source spreads out, filling an ever-increasing volume of space, the energy per unit area (brightness) drops off inversely as the area of a sphere; that is, brightness goes as one over the radius squared, 1/r^2.

The system of measurement used by astronomers for brightness is somewhat perverse. It is based on the historical stellar brightness measurements of the ancient Greek astronomer Hipparchus. He called the brightest stars first magnitude stars, the ones he felt to be half as bright second magnitude, and so forth down to the faintest visible stars, which were sixth magnitude. Notice that this means that magnitudes go backward, i.e., brighter stars have lower numbers. In this way the magnitude system is like golf scores at a major tournament: the lower the number, the brighter the star. Hipparchus's system was based upon the response of the observing instrument, namely the human eye. It just so happens that the eye's response is not linear but closer to logarithmic, hence the magnitude system is a logarithmic scale. In the modern system, a difference of 5 magnitudes is defined to be a factor of 100 in brightness, so as to be relatively close to the ancient definition of magnitude.
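The modern definition (5 magnitudes equals a factor of 100 in brightness) can be turned into a small calculation. The Python sketch below is only an illustration; the function names are made up here. It shows that one magnitude corresponds to a brightness factor of 100^(1/5), about 2.512, somewhat more than the factor of two Hipparchus had in mind.

```python
import math

def brightness_ratio(mag_faint, mag_bright):
    """How many times brighter the second star is than the first.

    Uses the modern definition: 5 magnitudes = a factor of 100.
    """
    return 100.0 ** ((mag_faint - mag_bright) / 5.0)

def magnitude_difference(ratio):
    """Magnitude difference corresponding to a given brightness ratio."""
    if ratio <= 0:
        raise ValueError("brightness ratio must be positive")
    return 2.5 * math.log10(ratio)

# A first magnitude star compared with a sixth magnitude star.
print(brightness_ratio(6.0, 1.0))            # a factor of 100
# A single magnitude step.
print(round(brightness_ratio(2.0, 1.0), 3))  # about 2.512
# Going the other way: a factor of 100 is 5 magnitudes.
print(magnitude_difference(100.0))
```

Note the argument order: the fainter star has the larger magnitude, so the ratio comes out greater than one.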

The brightness of a star as we see it in the heavens is known as its apparent magnitude. The apparent magnitude of a given star is what we would measure with a telescope. This is not an intrinsic property of a star, however, since the apparent magnitude depends on how close the star is to Earth, hardly a universal property. For the intrinsic brightness of a star the astronomer works in terms of the absolute magnitude, which is the magnitude the star would have at a standardized distance of 10 parsecs. Now if one knows the intrinsic or absolute magnitude of a star, then the difference between that and the observed apparent magnitude will tell you how far away that star is. The equation that relates these two magnitudes can be derived from the inverse square law. The quantity (m-M) is referred to as the distance modulus because it relates directly to the distance to a star. If a star has a distance modulus of m-M = 0 then it is exactly 10 pc away, because the apparent and absolute magnitudes are equal. A positive value of the distance modulus means the apparent magnitude is larger than the absolute, i.e., the star is farther than 10 pc and appears fainter than it would at a distance of 10 pc.
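To make the distance modulus concrete: by the inverse square law a star at distance d (in parsecs) appears fainter than it would at 10 pc by a factor of (d/10)^2, and converting that factor to magnitudes gives m - M = 5 log10(d / 10). The Python sketch below simply applies that relation in both directions. It assumes that no light is lost to dust between us and the star, and the function names are chosen here for illustration.

```python
import math

def distance_modulus(distance_pc):
    """Distance modulus m - M for a star at the given distance in parsecs."""
    if distance_pc <= 0:
        raise ValueError("distance must be positive")
    return 5.0 * math.log10(distance_pc / 10.0)

def distance_parsecs(apparent_mag, absolute_mag):
    """Distance in parsecs from apparent magnitude m and absolute magnitude M.

    Inverts m - M = 5 log10(d / 10 pc); ignores absorption by dust.
    """
    return 10.0 ** ((apparent_mag - absolute_mag) / 5.0 + 1.0)

# Equal magnitudes: the star sits at the standard distance of 10 pc.
print(distance_parsecs(3.0, 3.0))
# A distance modulus of +5 means the star is ten times farther away.
print(distance_parsecs(8.0, 3.0))
# A star closer than 10 pc has a negative distance modulus.
print(distance_modulus(5.0))
```

A star 100 pc away is ten times farther than the standard distance, so it appears 100 times fainter, which is exactly 5 magnitudes.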

The measurement of magnitudes is called photometry. In principle, photometry is straightforward. One simply measures the amount of light energy captured by a telescope aimed at a star. In practice it can be rather tricky. One needs to know precisely the properties of the telescope's optics: How much light is blocked by the telescope structure, how efficient are the mirrors (or lenses), how good is the detector? Also, the atmosphere absorbs or scatters some light from the star, reducing the amount that can be measured at the telescope. The atmospheric effects depend upon how high the star is above the horizon, the altitude of the telescope (thickness of the air), moisture in the air, etc. These sorts of effects can be accounted for in part through the use of standard stars against which the subject star is compared. In addition, these effects are wavelength dependent. For example, some wavelengths of the electromagnetic spectrum are unable to pass through the Earth's atmosphere, rendering impossible any ground-based observing at those wavelengths. Blue light is scattered more by air molecules than red light. The amount of scattering depends on how high the star is above the horizon, because light from a star near the horizon passes through more air. An example you are familiar with is the red appearance of the Sun when it is low on the horizon; the higher frequency (bluer) light is preferentially scattered away.

Obviously, therefore, one can measure only a small fraction of the complete spectrum of light that a star is producing. Because, in general, astronomers measure only a portion of the wavelength spectrum when they do photometry, they must estimate the amount of light contained in the rest of the spectrum. For example, you will remember from the discussion of blackbody radiation that if you know part of a blackbody curve, you know the whole thing. So a measurement in one part of the spectrum, say, yellow light, allows you to compute what the total luminosity is based on the blackbody curve. Astronomers define a concept called bolometric magnitude which is the magnitude a star would have if all of its light throughout the entire spectrum is included. One must have a bolometric magnitude in order to know the luminosity of a star (that is the total energy per second that it emits).

As mentioned, a ground-based telescope cannot measure the brightness in the entire electromagnetic spectrum. What one does instead is measure a well defined segment of that spectrum. This should be done in a standard way so that other astronomers can make identical measurements at other telescopes. To do this astronomers have created sets of special filters that pass light only within certain wavelength regions. One of the most common sets of photometric filters is known as the UBV filters. These are so-called "broad-band" filters because they are transparent to a relatively broad range of light wavelengths. The initials UBV stand for Ultraviolet, Blue and Visual because the filters pass light approximately in those regions of the spectrum.

Figure: The approximate spectral range for the UBV filters. Red light is 700 nm and violet is 400 nm. Hence the V bandpass is centered in the middle of the visual range, the B is centered on blue, and U is short of violet, that is, in the near ultraviolet. These filters provide good spectral coverage for the region of the electromagnetic spectrum that gets through the Earth's atmosphere most easily.

The UBV magnitude numbers give one a concise and standardized way to characterize the brightness and the color (and hence temperature) of a star. Color indices are the numbers obtained by subtracting one UBV magnitude from another, say (B-V) or (U-B). Such a number tells you whether the star is emitting more radiation in (say) the Blue band as opposed to the Visual. A star that emits relatively more in the Blue band is hotter (from the rules for blackbody radiation). But recall also that smaller numbers mean greater brightness in the magnitude system. So a star that had more light in the blue band than in the visual band would have a B-V color index that was less than zero (B less than V). In general, remember that if the color index is negative, the star is brighter in the first of the listed wavebands. If the color index is positive, it is brighter in the second of the two. As an example, we have already discussed how the Sun peaks in the yellow-green part of the spectrum, so it is brighter in the Visual band than in the Blue or Ultraviolet; that is, its V magnitude is numerically smaller than its B or U magnitude. In fact (B-V) for the Sun is +0.62, i.e., it is brighter in V than in B. A star with a smaller B-V would be hotter than the Sun; a star with a larger B-V would be cooler. Color indices provide a good way of quickly determining the temperature of a star. We should note that stars are not perfect blackbodies, so astronomers do have to be a bit more exacting, but we will not concern ourselves with those details in this class.
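The sign rules for color indices are easy to get backward, so here is a small Python sketch that applies them. The Sun's (B-V) of +0.62 is the value quoted above; the two other stars and their magnitudes are invented purely for illustration, and the function names are hypothetical.

```python
SUN_B_MINUS_V = 0.62  # value quoted in the text

def color_index(mag_first, mag_second):
    """Color index, e.g. B - V, from two magnitudes of the same star."""
    return mag_first - mag_second

def brighter_band(index, first="B", second="V"):
    """Which band the star is brighter in, given the color index first - second."""
    if index < 0:
        return first   # smaller magnitude in the first band means brighter there
    if index > 0:
        return second
    return "equal"

def hotter_than_sun(b_minus_v):
    """A smaller B - V than the Sun's indicates a hotter star."""
    return b_minus_v < SUN_B_MINUS_V

# Made-up example stars: (name, B magnitude, V magnitude).
stars = [("hypothetical blue star", 1.0, 1.25),
         ("hypothetical red star", 6.5, 5.0)]

for name, b_mag, v_mag in stars:
    bv = color_index(b_mag, v_mag)
    print(name, "B-V =", bv,
          "| brighter in", brighter_band(bv),
          "| hotter than Sun:", hotter_than_sun(bv))
```

The comparison with the Sun is only as good as the blackbody approximation; as noted above, real stars require a more careful calibration.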

Spectroscopy

Photometry is the measure of the total intensity of light in a certain band of the spectrum. Another astronomical technique is spectroscopy, which is the study of the detailed features of a stellar spectrum. To do spectroscopy one must spread the incoming light out into its individual wavelengths. The prism is an example of a way to do this that everyone will be familiar with. In fact, the first systematic study of stellar spectra was done by putting a prism at the front end of the telescope (the "Objective Prism Technique"). This spreads out the stellar images so that instead of points of light on the photographic plate one has a line of light, i.e., a spectrum. One can then study the various absorption lines that appear superposed on the continuum. A system of classification was developed, initially using the strength of the Hydrogen "Balmer" lines (see Figures 4-16 and 4-17). In addition to those produced by hydrogen, there are other prominent absorption lines. It was finally realized that the types of lines seen in a star's spectrum were an indication of the star's temperature. Thus we have the system of spectral type (or spectral class): stars with different spectral types have different temperatures and produce different absorption lines.

Figure: Figure 16.5 in the book shows relative line strengths for various types of absorption lines as a function of Spectral type. Thus it is possible to obtain a star's surface temperature from the type of absorption lines seen in its spectrum.